SCENE 1: (What is CiteIt?)

- “Hello, I’m Tim Langeman, founder of CiteIt. I’ve created a writing tool that helps writers overcome the ‘Trilemma’ they encounter when they seek to show readers what journalist Ken Klippenstein calls ‘the receipts.’”

SCENE 2: THE TRILEMMA (The Three Bad Choices)

- “Traditional writing tools actually punish you for being thorough. Currently, you’re forced to choose between:

- The Exit Ramp—in which external links send readers into a sea of tabs;

- The Clutter—extra inline text that kills your prose; or

- The Credibility/Understanding Gap—in which writers choose not to bring the receipts at all, inviting shallow understanding or accusations of cherry-picking.”

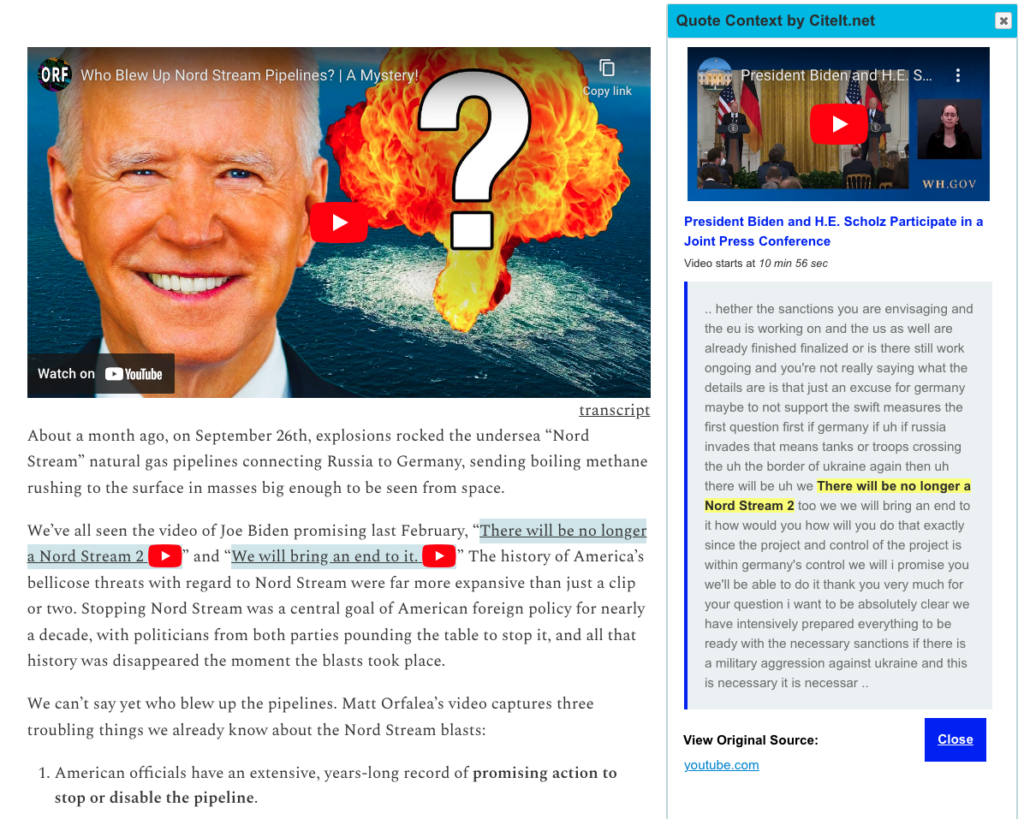

SCENE 3: THE SOLUTION (The Inspection Layer)

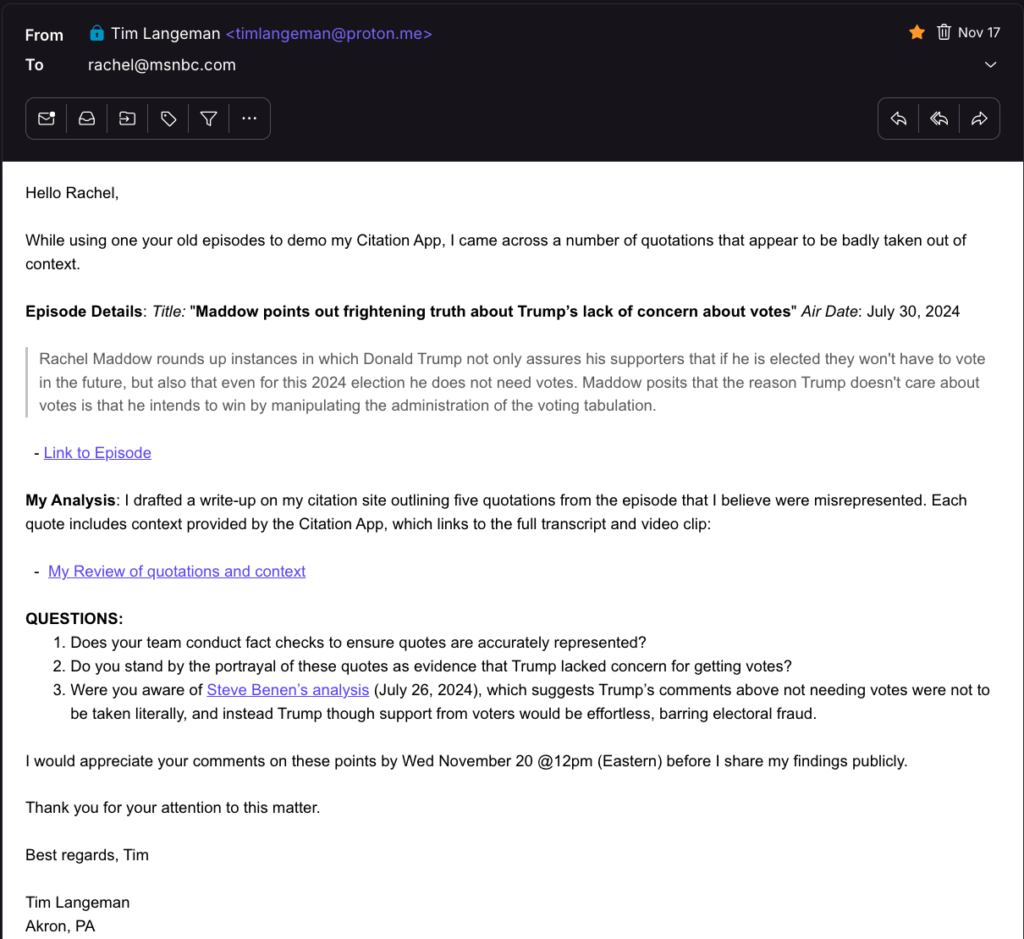

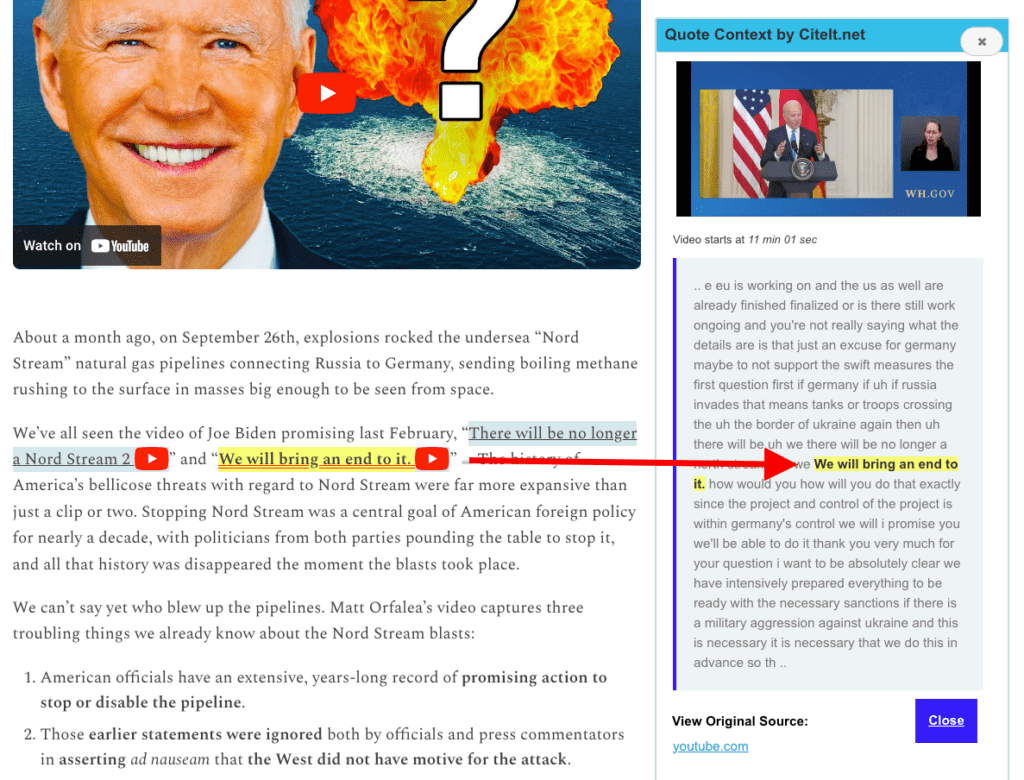

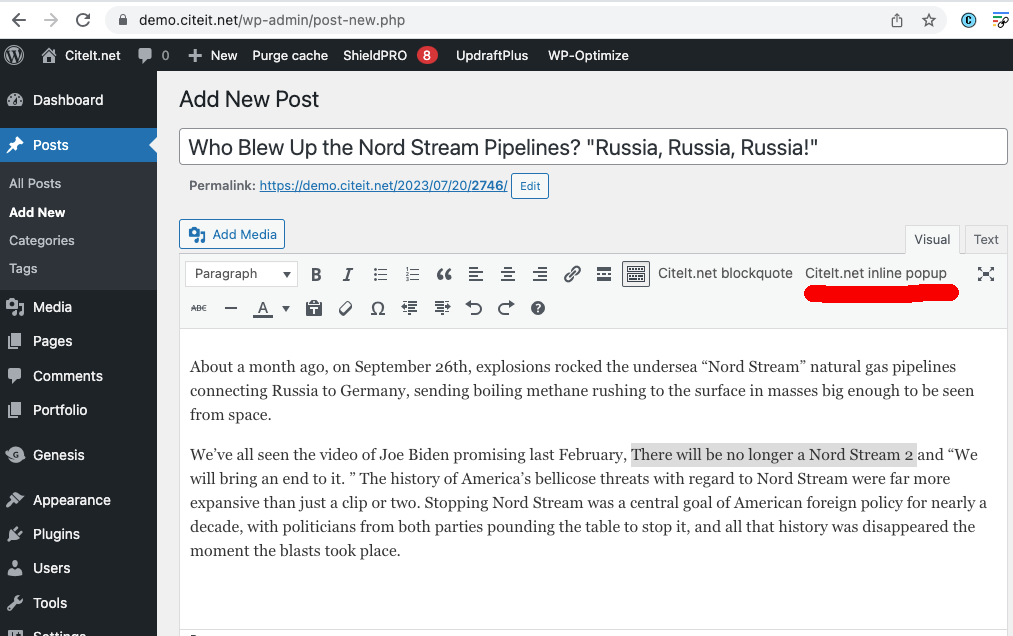

- “CiteIt solves this trilemma with an Inspection Layer. Just as images can expand for a closer look, CiteIt expands quotes to show the surrounding context—pulling in the text before and after a citation. For video, it shows the synced transcript and 30 seconds of footage, keeping readers anchored in your story. When they close the layer, a Visual Wayfinding animation pulses where they left off.

- CiteIt is easy! If you can paste a URL, CiteIt can generate the receipt.”

SCENE 4: THE MISSION (Trust, Understanding)

- “CiteIt’s mission is to build trust and understanding by showing the full context, without penalty. For fans, extra context is a convenience. For the skeptical visitor, it operates like a ‘Proof-of-Work’ certificate, showing the depth of your research and helping convert visitors into subscribers. But it’s not just for hard news; it also offers Cultural Resonance, enabling readers to instantly rediscover memorable movie lines or viral moments.”

SCENE 5: THE PITCH & CTA

- “I created a live WordPress demo at demo.citeit.net, but my goal is to bring the feature to Substack. I’m looking for ten founding writers to simply signal their interest so I can show the Substack Product Lab that there is real demand for this feature. If you want to give contextual transparency a chance at becoming a native tool, just reach out by email. No commitment required, just your vote for a future where ‘bringing the receipts’ is a structural advantage, not a penalty.”